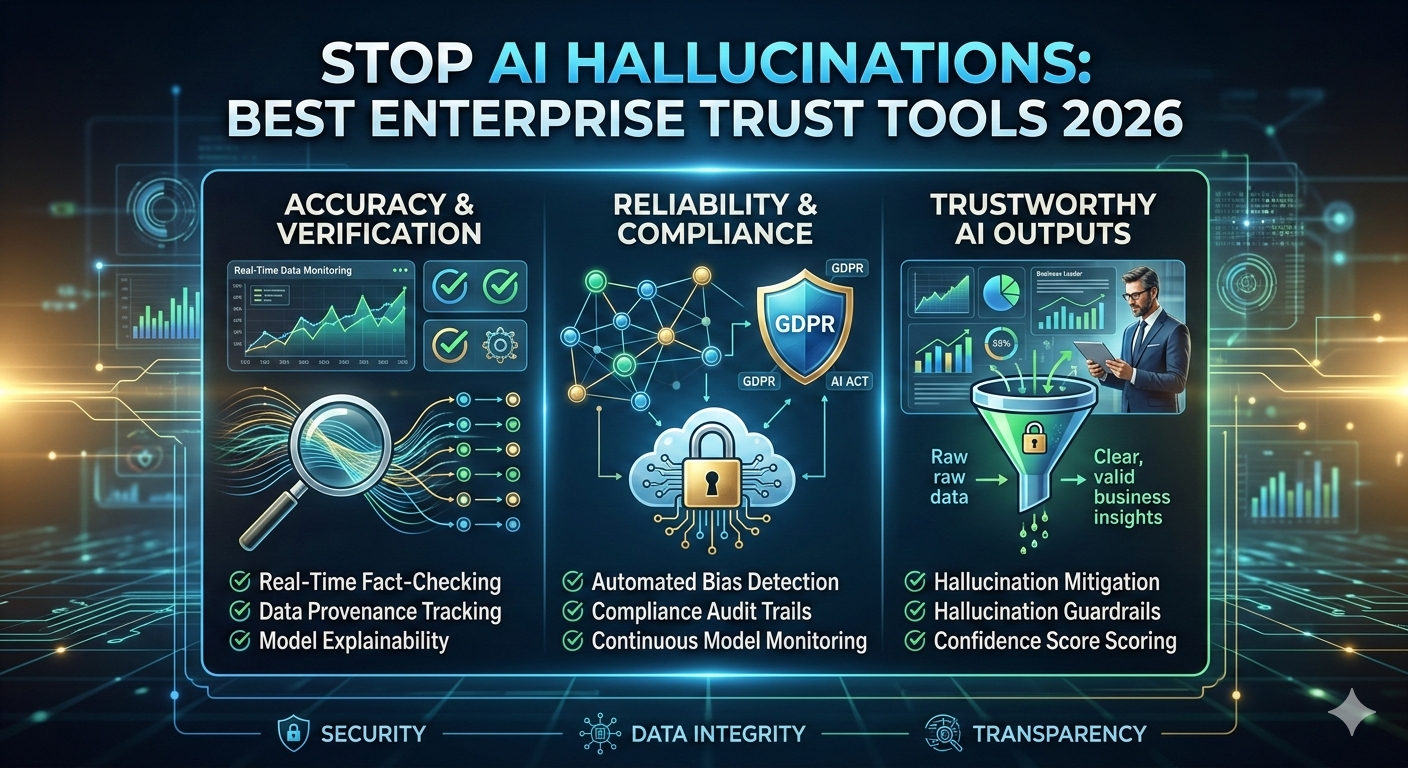

How to Stop AI Hallucinations: The Best Enterprise Trust Tools of 2026 for Verifiable Intelligence

We’re now in the age of the Confident Liar. Large language models (LLMs) have become smarter and faster than ever, but they have one major flaw: they lie with complete confidence, a phenomenon the technology world calls a hallucination. It’s a problem enterprises need to solve, and a new generation of trust tools promises verifiable intelligence. Solving it starts with understanding why hallucinations happen, which is often due to flawed data, incorrect assumptions, or inadequate training. In my experience, though, the tools alone are not enough. You have to understand how they work and how to implement them effectively, as part of a holistic approach to AI trust that includes education, training, and awareness.

Hallucinations are not accidents; they follow from how these models are designed to generate responses quickly and efficiently. As platforms like DataCNTech explain, these systems predict the next word in a sequence. Sometimes the result is a beautiful poem; other times it is a false financial figure that could cost your organization millions. Avoiding that trap takes a deep understanding of the models and their limitations, along with a customer-centric approach to AI development that focuses on transparency, explainability, and accountability.

So what’s changed? Two years ago, an AI lie was just a funny meme. In 2026, it can become a regulatory nightmare. Boards of directors now require verifiable AI, demanding evidence that the models tell the truth, and enterprise trust tools exist to supply that evidence. As I’ve seen, though, technology is only part of the answer; the human factor matters just as much.

This is why AI observability has become such a popular field. Organizations no longer just buy models; they also buy tools to monitor them. In effect, we need a “cyber nanny” to oversee the smartest systems on earth. In my experience, good observability combines monitoring, logging, and analytics, and it only pays off when the team understands how the tools work and where the underlying models fall short.
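To make the “monitoring, logging, and analytics” idea concrete, here is a minimal Python sketch of the logging layer an observability tool might build on. It is not any vendor’s API: `ObservedModel`, `TraceRecord`, and `fake_model` are hypothetical names, and `fake_model` simply stands in for a real LLM client. The wrapper records each prompt, response, and latency, and exports the traces as JSON Lines that a dashboard or log pipeline could ingest.

```python
import json
import time
from dataclasses import asdict, dataclass, field
from typing import Callable, List


@dataclass
class TraceRecord:
    """One logged model interaction (hypothetical schema)."""
    prompt: str
    response: str
    latency_ms: float
    timestamp: float


@dataclass
class ObservedModel:
    """Wraps any prompt -> response function and records every call."""
    model_fn: Callable[[str], str]
    traces: List[TraceRecord] = field(default_factory=list)

    def __call__(self, prompt: str) -> str:
        start = time.perf_counter()
        response = self.model_fn(prompt)
        latency_ms = (time.perf_counter() - start) * 1000
        self.traces.append(TraceRecord(prompt, response, latency_ms, time.time()))
        return response

    def export_jsonl(self) -> str:
        """Serialize all traces as JSON Lines, one record per line."""
        return "\n".join(json.dumps(asdict(t)) for t in self.traces)

    def mean_latency_ms(self) -> float:
        """Simple analytics over the recorded calls."""
        if not self.traces:
            return 0.0
        return sum(t.latency_ms for t in self.traces) / len(self.traces)


def fake_model(prompt: str) -> str:
    """Stand-in for a real LLM client; purely illustrative."""
    return f"echo: {prompt}"


if __name__ == "__main__":
    model = ObservedModel(fake_model)
    model("What was Q3 revenue?")
    model("Summarize clause 4.")
    print(model.export_jsonl())
    print(f"mean latency: {model.mean_latency_ms():.3f} ms")
```

Wrapping the model function, rather than modifying it, means the same logging applies to any client without touching application code.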

The New Face of the Hallucination Crisis

By the end of 2025, AI had changed. Older models often got historical facts wrong, but newer “reasoning” models, such as the o3 series or Gemini, fail in subtler ways. They rarely confuse simple facts; instead, they struggle with contextual hallucinations. In my experience, the problem lies not just in the models but also in the data they’re trained on, and in how that data is curated and managed.

Contextual hallucination occurs when the AI knows the facts but fails to apply logic to them. It can summarize a 50-page agreement and then describe a clause that doesn’t exist. This is extremely dangerous because the invented clause sounds professional and matches the tone of the real document. I call this the Trap of Reasoning.
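One narrow but useful guard against the phantom-clause problem is to check every clause a summary cites against the clauses that actually exist in the source. The Python sketch below assumes a particular numbering style (summaries say “Clause 7” and clause headings start a line with “7.” or “12.3”); real contracts vary, so treat the regular expressions as assumptions to adapt, and the function names as hypothetical.

```python
import re
from typing import List, Set

# Assumed citation style in summaries: "Clause 7", "clause 12.3".
CLAUSE_REF = re.compile(r"\bclause\s+(\d+(?:\.\d+)*)", re.IGNORECASE)
# Assumed heading style in the agreement: a line starting "7. Termination" or "12.3 Fees".
CLAUSE_HEADING = re.compile(r"^\s*(\d+(?:\.\d+)*)\.?\s+\S", re.MULTILINE)


def clause_ids(agreement: str) -> Set[str]:
    """Collect the clause numbers that actually appear as headings in the source."""
    return set(CLAUSE_HEADING.findall(agreement))


def unsupported_clause_refs(summary: str, agreement: str) -> List[str]:
    """Return clause numbers cited in the summary that the agreement does not contain."""
    known = clause_ids(agreement)
    cited = set(CLAUSE_REF.findall(summary))
    return sorted(cited - known)


if __name__ == "__main__":
    agreement = (
        "1. Definitions\nTerms used in this agreement.\n"
        "2. Payment\nInvoices are due within 30 days.\n"
        "3. Termination\nEither party may terminate with notice."
    )
    summary = "Clause 2 sets 30-day payment terms, and Clause 7 imposes a penalty."
    print(unsupported_clause_refs(summary, agreement))  # ['7']
```

This only catches citations to clauses that do not exist; a summary that misstates what a real clause says needs a content-level check on top.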

Businesses are experiencing this through agentic AI systems, which can perform tasks like booking flights or transferring money between accounts. If an agent assumes a permission it doesn’t have, the consequences are immense. We’ve shifted from chatbots to “do-bots,” and there’s no room for error. According to Gartner, AI will be used to enhance customer experience, but that only works if the agents delivering it don’t hallucinate.
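A common defense against an agent “assuming permission” is to never let the model decide what it is allowed to do: every proposed action passes through a deterministic, deny-by-default gate before it executes. The sketch below is illustrative only; the action names, scope strings, and `TRANSFER_LIMIT` are hypothetical policy values, not taken from any specific agent framework.

```python
from dataclasses import dataclass
from typing import Dict, FrozenSet


class PermissionDenied(Exception):
    """Raised when an agent proposes an action outside its granted scopes."""


@dataclass(frozen=True)
class ProposedAction:
    name: str            # e.g. "transfer_funds" (hypothetical tool name)
    amount: float = 0.0  # monetary value, if any


# Hypothetical policy: the scope each action requires, plus a hard limit.
REQUIRED_SCOPE: Dict[str, str] = {
    "book_flight": "travel:write",
    "transfer_funds": "payments:write",
}
TRANSFER_LIMIT = 10_000.0


def authorize(action: ProposedAction, granted: FrozenSet[str]) -> None:
    """Deny by default; the agent's own claims about its permissions are never consulted."""
    scope = REQUIRED_SCOPE.get(action.name)
    if scope is None:
        raise PermissionDenied(f"unknown action {action.name!r}")
    if scope not in granted:
        raise PermissionDenied(f"{action.name!r} requires scope {scope!r}")
    if action.name == "transfer_funds" and action.amount > TRANSFER_LIMIT:
        raise PermissionDenied(f"transfer of {action.amount} exceeds limit")


if __name__ == "__main__":
    granted = frozenset({"travel:write"})
    authorize(ProposedAction("book_flight"), granted)  # allowed, returns None
    try:
        authorize(ProposedAction("transfer_funds", 500.0), granted)
    except PermissionDenied as err:
        print(err)  # 'transfer_funds' requires scope 'payments:write'
```

The key design choice is that permissions come from the caller’s granted scopes, which the model cannot edit, so a hallucinated belief that it “may” transfer money has no effect.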

So, what can we do? To combat the Confident Liar, you need a special set of tools, because traditional software monitoring isn’t enough. You can’t just verify that the server is online; you need to ensure the output is true. As I’ve learned, people and processes matter as much as the tools, and it’s essential to stay up to date with the latest research on detecting and preventing hallucinations.
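What does “checking that the output is true” look like in code? Commercial tools typically use trained detectors or LLM judges; as a far cruder stand-in, the Python sketch below flags answer sentences whose content words mostly do not appear in the source material. The stopword list, the 0.6 threshold, and the function names are arbitrary assumptions for illustration, and lexical overlap will miss paraphrases and subtle contradictions.

```python
import re
from typing import List, Set, Tuple

# Tiny illustrative stopword list; a real system would use a proper one.
STOPWORDS = {"the", "a", "an", "of", "to", "and", "in", "is", "was", "for", "on", "by"}


def content_tokens(text: str) -> Set[str]:
    """Lowercased words and numbers (keeping decimals like 4.2), minus stopwords."""
    tokens = re.findall(r"[a-z0-9]+(?:\.[0-9]+)?", text.lower())
    return {t for t in tokens if t not in STOPWORDS}


def split_sentences(text: str) -> List[str]:
    """Naive split on ., ! or ? followed by whitespace."""
    return [s.strip() for s in re.split(r"(?<=[.!?])\s+", text) if s.strip()]


def unsupported_sentences(
    answer: str, source: str, threshold: float = 0.6
) -> List[Tuple[str, float]]:
    """Return (sentence, support) pairs where support = share of tokens found in source."""
    src = content_tokens(source)
    flagged = []
    for sentence in split_sentences(answer):
        toks = content_tokens(sentence)
        if not toks:
            continue
        support = len(toks & src) / len(toks)
        if support < threshold:
            flagged.append((sentence, round(support, 2)))
    return flagged


if __name__ == "__main__":
    source = "Q3 revenue was 4.2 million dollars. Operating costs rose 3 percent."
    answer = "Q3 revenue was 4.2 million dollars. Profit doubled to 9 million."
    print(unsupported_sentences(answer, source))
    # [('Profit doubled to 9 million.', 0.25)]
```

The point is the shape of the check, comparing output against evidence sentence by sentence, rather than this particular scoring rule.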

Hallucination Killers: The Best Options in 2026 to Stop AI Hallucinations

The following platforms are the dominant players in the war on AI hallucinations in 2026. For background on hallucinations themselves, Microsoft Research is a good place to start.

Galileo AI: The Real-Time Shield to Stop AI Hallucinations

Galileo AI leads in runtime protection. Its Luna-2 engine acts as a real-time filter between the AI and the user, analyzing each response in under 200 milliseconds. If the AI starts to hallucinate, Galileo prevents the user from seeing the message.
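The general pattern of a runtime filter, score each response before the user sees it and withhold it if the score is bad, can be sketched in a few lines of Python. This is not how Luna-2 works internally (the article doesn’t say); `guarded_reply`, `toy_detector`, and `slow_detector` are hypothetical, and the 200-millisecond figure from the text is simply used as the default `budget_s`. The sketch fails closed: if the detector errors out or misses the latency budget, the reply is withheld.

```python
import concurrent.futures as cf
import time
from typing import Callable

BLOCKED_MESSAGE = "This answer could not be verified and has been withheld."

# Shared pool so each check does not pay thread start-up cost.
_executor = cf.ThreadPoolExecutor(max_workers=4)


def guarded_reply(
    response: str,
    context: str,
    detector: Callable[[str, str], float],
    threshold: float = 0.5,
    budget_s: float = 0.2,
) -> str:
    """Release the response only if the detector rates it low-risk within budget_s.

    detector(response, context) returns an estimated hallucination risk in [0, 1].
    Fails closed: a timeout or a detector error also withholds the reply.
    """
    future = _executor.submit(detector, response, context)
    try:
        risk = future.result(timeout=budget_s)
    except Exception:  # covers cf.TimeoutError and errors raised by the detector
        return BLOCKED_MESSAGE
    return response if risk < threshold else BLOCKED_MESSAGE


def toy_detector(response: str, context: str) -> float:
    """Hypothetical detector: share of response words that never appear in the context."""
    words = set(response.lower().split())
    known = set(context.lower().split())
    return len(words - known) / len(words) if words else 0.0


def slow_detector(response: str, context: str) -> float:
    """Simulates a detector that blows the latency budget."""
    time.sleep(0.5)
    return 0.0


if __name__ == "__main__":
    ctx = "the contract renews every 12 months"
    print(guarded_reply("the contract renews every 12 months", ctx, toy_detector))
    print(guarded_reply("the contract includes a 5 percent penalty", ctx, toy_detector))
    print(guarded_reply("the contract renews every 12 months", ctx, slow_detector))
```

Failing closed trades some false blocks for safety; note that a timed-out detector thread keeps running in the background, so a production version would also need cancellable detectors.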

But how does it work? Galileo applies chain-of-thought analysis to detect lies, verifying the reasoning behind the AI’s answer. If the logic is inconsistent, the response is flagged. To learn more about chain-of-thought analysis, you can visit IBM Cloud.
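Galileo’s actual analysis isn’t described in detail here, so the following is only a narrow illustration of what “verifying the reasoning” can mean: re-checking the arithmetic a model states in each reasoning step. The Python sketch assumes steps contain simple claims of the form `a op b = c`; the regex, tolerance, and function name are hypothetical, and real reasoning checks must also handle logic that isn’t arithmetic.

```python
import re
from typing import List

# Matches simple stated claims such as "40 * 25 = 1000" or "12 / 4 = 3".
STEP = re.compile(
    r"(-?\d+(?:\.\d+)?)\s*([-+*/x])\s*(-?\d+(?:\.\d+)?)\s*=\s*(-?\d+(?:\.\d+)?)"
)

OPS = {
    "+": lambda a, b: a + b,
    "-": lambda a, b: a - b,
    "*": lambda a, b: a * b,
    "x": lambda a, b: a * b,
    "/": lambda a, b: a / b if b != 0 else float("nan"),  # NaN never matches a claim
}


def inconsistent_steps(chain: List[str], tol: float = 1e-6) -> List[int]:
    """Return indices of reasoning steps whose stated arithmetic does not hold."""
    bad = []
    for i, step in enumerate(chain):
        for a, op, b, claimed in STEP.findall(step):
            actual = OPS[op](float(a), float(b))
            # Relative tolerance; comparisons with NaN are False, so they get flagged.
            if not abs(actual - float(claimed)) <= tol * max(1.0, abs(actual)):
                bad.append(i)
                break
    return bad


if __name__ == "__main__":
    chain = [
        "Unit price is 40 and we sold 25 units.",
        "Revenue: 40 * 25 = 1000.",
        "After 15% costs: 1000 - 150 = 900.",
    ]
    print(inconsistent_steps(chain))  # [2]
```

A step flagged this way is exactly the kind of “logic is inconsistent” signal the paragraph above describes, even when the final answer sounds plausible.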